Audio Commons Manual Annotators

One of the challenges in making use of Creative Commons audio content is that it comes from various sources and authors with different backgrounds and levels of expertise. As a result, the content is often unstructured and poorly annotated, which hinders its efficient retrieval. Intelligently guiding users through the annotation process would yield reliable, uniform and complete descriptions of the content, which in turn would facilitate its sharing. More specifically, one of our aims within Audio Commons is to annotate content with a large set of predefined concepts organised in a hierarchy (often called a taxonomy). To this end, we developed two web-based tools for the manual annotation of audio content, both of which can be integrated into other projects: the Audio Commons Manual Annotator (AC Manual Annotator) adds missing labels, whereas the Audio Commons Refinement Annotator (AC Refinement Annotator) lets users refine existing labels and make them more specific.

AC Manual Annotator

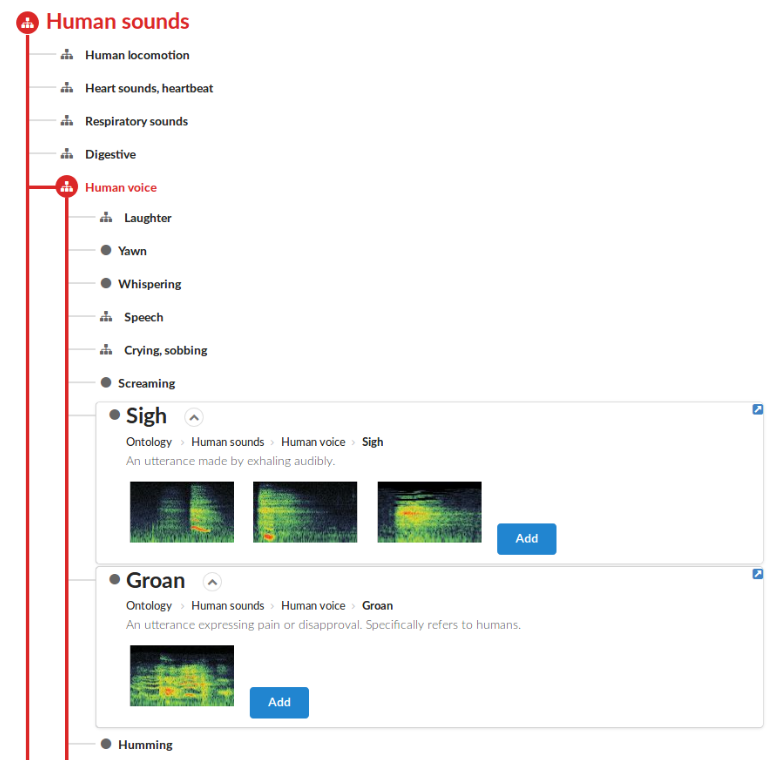

With the AC Manual Annotator, labels can be assigned to an audio clip. The interface is designed to give annotators a quick overview of the available categories. Moreover, given the size of the hierarchy in taxonomies such as AudioSet, it is important to show where each category is located and what context surrounds it. Another design criterion was to let annotators compare categories by displaying the information of several categories at the same time. A text-based search allows users to locate categories in the taxonomy table.
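To illustrate what showing the "location and context" of a search result can mean, here is a minimal Python sketch. It is not the AC Manual Annotator's code: the toy taxonomy and the names `TAXONOMY`, `build_parents`, `path_to_root` and `search` are hypothetical, and it assumes every category has at most one parent (the real AudioSet ontology also contains categories with several parents). A case-insensitive substring search returns each matching category together with its path from the root.

```python
from typing import Dict, List

# Hypothetical toy excerpt of a taxonomy (not the real AudioSet data):
# category ID -> display name and IDs of its child categories.
# Assumption: every category has at most one parent, i.e. the taxonomy is a tree.
TAXONOMY: Dict[str, dict] = {
    "animal": {"name": "Animal", "children": ["pets", "wild"]},
    "pets": {"name": "Domestic animals, pets", "children": ["dog", "cat"]},
    "dog": {"name": "Dog", "children": ["bark", "howl"]},
    "bark": {"name": "Bark", "children": []},
    "howl": {"name": "Howl", "children": []},
    "cat": {"name": "Cat", "children": ["meow"]},
    "meow": {"name": "Meow", "children": []},
    "wild": {"name": "Wild animals", "children": []},
}


def build_parents(taxonomy: Dict[str, dict]) -> Dict[str, str]:
    """Map each category ID to its parent's ID; root categories are absent."""
    parents: Dict[str, str] = {}
    for cat_id, node in taxonomy.items():
        for child_id in node["children"]:
            parents[child_id] = cat_id
    return parents


def path_to_root(cat_id: str, taxonomy: Dict[str, dict], parents: Dict[str, str]) -> List[str]:
    """Return the category names on the path from the root down to cat_id."""
    path = [taxonomy[cat_id]["name"]]
    while cat_id in parents:
        cat_id = parents[cat_id]
        path.append(taxonomy[cat_id]["name"])
    return path[::-1]


def search(query: str, taxonomy: Dict[str, dict]) -> List[str]:
    """Case-insensitive substring search over category names.

    Each match is returned with its context, e.g. "Animal > ... > Dog".
    """
    parents = build_parents(taxonomy)
    needle = query.lower()
    return [
        " > ".join(path_to_root(cat_id, taxonomy, parents))
        for cat_id, node in taxonomy.items()
        if needle in node["name"].lower()
    ]


print(search("dog", TAXONOMY))
# → ['Animal > Domestic animals, pets > Dog']
```

Returning the full path rather than the bare category name is what lets an annotator see where a match sits in a large hierarchy and distinguish similarly named categories in different branches.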

AC Refinement Annotator

The AC Refinement Annotator displays previously existing labels as rows. For each label, the annotator can examine its location in the AudioSet hierarchy as well as its sibling and child categories. By exploiting the hierarchy, the tool aims to support the annotation process with an iterative way of specifying the type or nature of the content.
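The iterative refinement described above can be sketched in Python as follows. This is an assumption about how such a workflow could be modelled, not the AC Refinement Annotator's implementation: the toy taxonomy, the single-parent assumption and the names `CHILDREN`, `PARENT`, `refinement_options` and `refine` are all hypothetical. For an existing label the sketch collects its siblings and children, then repeatedly replaces the label with a chosen child until a leaf is reached or the annotator stops.

```python
from typing import Callable, Dict, List, Optional

# Hypothetical toy taxonomy (not the real AudioSet ontology):
# category name -> list of child category names.
CHILDREN: Dict[str, List[str]] = {
    "Animal": ["Domestic animals, pets", "Wild animals"],
    "Domestic animals, pets": ["Dog", "Cat"],
    "Wild animals": [],
    "Dog": ["Bark", "Howl"],
    "Cat": ["Meow", "Purr"],
    "Bark": [],
    "Howl": [],
    "Meow": [],
    "Purr": [],
}

# Parent lookup derived from CHILDREN (assumes a single parent per category).
PARENT: Dict[str, str] = {c: p for p, kids in CHILDREN.items() for c in kids}


def refinement_options(label: str) -> dict:
    """Context shown for one existing label: its siblings and its children."""
    parent = PARENT.get(label)
    siblings = [s for s in CHILDREN[parent] if s != label] if parent is not None else []
    return {"label": label, "siblings": siblings, "children": list(CHILDREN[label])}


def refine(label: str, choose: Callable[[dict], Optional[str]]) -> List[str]:
    """Iteratively replace `label` with a child picked by `choose` until a
    leaf is reached or `choose` returns None; return the refinement trail."""
    trail = [label]
    while CHILDREN[label]:
        choice = choose(refinement_options(label))
        if choice is None:
            break  # the annotator is satisfied with the current label
        if choice not in CHILDREN[label]:
            raise ValueError(f"{choice!r} is not a child of {label!r}")
        label = choice
        trail.append(label)
    return trail


print(refinement_options("Dog"))
# → {'label': 'Dog', 'siblings': ['Cat'], 'children': ['Bark', 'Howl']}
print(refine("Animal", lambda opts: opts["children"][0]))
# → ['Animal', 'Domestic animals, pets', 'Dog', 'Bark']
```

Here `choose` stands in for the annotator's decision; in an interface it would correspond to selecting one of the displayed child categories, and returning `None` to keeping the current label.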

More information can be found in our 23rd IEEE FRUCT Conference paper.